Introduction

Embodied AI systems need more than visually plausible 3D content. They need assets and environments that can be executed in simulation, manipulated by robots, and transferred to real-world tasks.

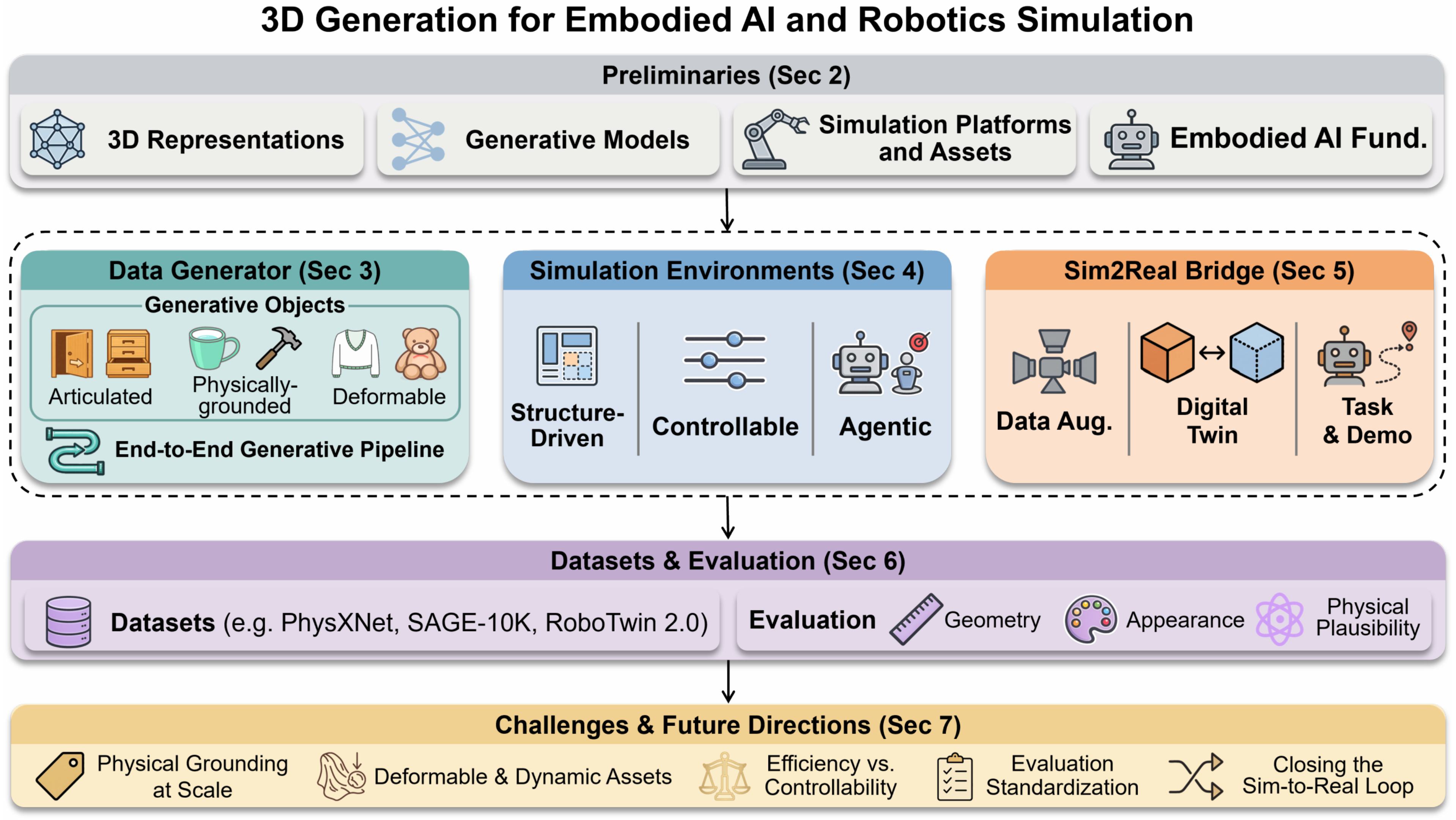

This survey treats 3D generation as infrastructure for embodied learning rather than only a visual synthesis problem. The central question is simulation readiness: whether generated objects, scenes, and reconstructions are physically grounded, kinematically executable, semantically controllable, and simulator compatible.

Why It Matters

Robotic training pipelines are bottlenecked by the scarcity of scalable, interaction-ready 3D content.

Core Lens

Methods are judged by physical deployability, not just geometric or visual realism.

Scope

The survey spans asset generation, environment construction, and sim-to-real transfer.

Taxonomy

We organize the field around three complementary roles. Together they cover the path from simulation-ready asset creation, to executable world construction, to real-world transfer.

Data Generator

Generate articulated, physically grounded, deformable, or end-to-end simulation-ready assets.

Simulation Environments

Compose interactive scenes for navigation, manipulation, and agent training.

Sim2Real Bridge

Reconstruct and augment real-world instances for transfer-oriented pipelines.

Data Generator | Simulation-ready 3D Assets

This branch studies how to generate objects that can be dropped into physics engines without heavy manual cleanup. The key requirement is physical deployability, not only appearance quality.

Representative Asset Types

- Articulated objects with plausible joints and kinematics

- Physically grounded rigid objects with mass and material attributes

- Deformable objects such as cloth, ropes, and soft bodies

- End-to-end pipelines that export URDF or MJCF-ready assets
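To make the asset types above concrete, here is a minimal sketch of what a URDF-ready articulated asset carries: links with mass and inertia, plus a joint with type, axis, origin, and limits. The cabinet, its link names, and every numeric value are hypothetical placeholders chosen for illustration; they are not taken from any surveyed method.

```python
import xml.etree.ElementTree as ET


def make_link(name, mass):
    """Build a URDF <link> with a mass and a placeholder diagonal inertia."""
    link = ET.Element("link", name=name)
    inertial = ET.SubElement(link, "inertial")
    ET.SubElement(inertial, "mass", value=str(mass))
    # Placeholder inertia tensor; a real pipeline would derive it from geometry.
    ET.SubElement(inertial, "inertia", ixx="0.01", ixy="0", ixz="0",
                  iyy="0.01", iyz="0", izz="0.01")
    return link


def make_cabinet_urdf():
    """Return a URDF string for a toy cabinet: a body and a hinged door."""
    robot = ET.Element("robot", name="toy_cabinet")
    robot.append(make_link("body", 5.0))
    robot.append(make_link("door", 1.0))

    # A revolute joint needs a parent, child, origin, axis, and limits.
    joint = ET.SubElement(robot, "joint", name="door_hinge", type="revolute")
    ET.SubElement(joint, "parent", link="body")
    ET.SubElement(joint, "child", link="door")
    ET.SubElement(joint, "origin", xyz="0.2 0 0", rpy="0 0 0")
    ET.SubElement(joint, "axis", xyz="0 0 1")
    ET.SubElement(joint, "limit", lower="0", upper="1.57",
                  effort="10", velocity="1")
    return ET.tostring(robot, encoding="unicode")


if __name__ == "__main__":
    print(make_cabinet_urdf())
```

A complete asset would also need visual and collision geometry on each link; those are omitted here to keep the physical and kinematic fields in focus.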

Main Tension

- Learning-based models provide strong geometry but need physical annotations

- LLM/VLM-based systems offer better semantic flexibility and open-vocabulary control

Simulation Environments

Here the target is no longer an isolated object but a full interactive world that supports perception, navigation, manipulation, and task execution.

Generation Modes

- Structure-driven generation from procedural rules or learned layout priors

- Controllable generation from language, vision, or physics constraints

- Agentic generation with planning, tool use, and simulation feedback

Practical Focus

- Scene composition must remain semantically coherent and physically executable

- Recent systems improve robustness by iteratively correcting failed layouts
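The idea of iteratively correcting failed layouts can be illustrated with a toy generate, validate, and repair loop. This is a sketch under strong assumptions: objects are 2D axis-aligned footprints, the only validity check is overlap, and repair is plain re-sampling. The function names are hypothetical, and real systems would use a physics engine or learned critic for feedback.

```python
import random


def overlaps(a, b):
    """True if two footprints (x, y, width, depth) intersect."""
    return (a[0] < b[0] + b[2] and b[0] < a[0] + a[2]
            and a[1] < b[1] + b[3] and b[1] < a[1] + a[3])


def find_collisions(layout):
    """Return index pairs (i, j), i < j, of overlapping footprints."""
    return [(i, j)
            for i in range(len(layout))
            for j in range(i + 1, len(layout))
            if overlaps(layout[i], layout[j])]


def sample_pose(size, room, rng):
    """Sample a footprint of the given (width, depth) fully inside the room."""
    w, d = size
    return (rng.uniform(0, room[0] - w), rng.uniform(0, room[1] - d), w, d)


def generate_layout(sizes, room, max_rounds=100, seed=0):
    """Propose a layout, then repeatedly re-sample objects that collide."""
    rng = random.Random(seed)
    layout = [sample_pose(s, room, rng) for s in sizes]
    for _ in range(max_rounds):
        collisions = find_collisions(layout)
        if not collisions:
            return layout
        # Repair step: re-sample the second object of each colliding pair.
        for j in {j for _, j in collisions}:
            layout[j] = sample_pose(sizes[j], room, rng)
    # Give up if the final repaired layout is still invalid.
    return layout if not find_collisions(layout) else None
```

Re-sampling only the offending objects, rather than the whole scene, keeps the parts of the layout that already passed validation.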

Sim2Real Bridge | Transfer-oriented Pipelines

This branch focuses on reconstructing and augmenting specific real-world instances so that simulation and deployment form a tighter feedback loop.

Three Stages

- Digital twins for physically faithful reconstruction

- 3D-grounded data augmentation across view, geometry, and time

- Task and demo generation for scalable policy learning

Key Distinction

- These methods target real instances rather than novel category-level assets

- Real observations feed simulation, and synthetic data feeds deployment

Examples

Data Generator

Visual examples from representative data generation methods, covering articulated object generation and physically grounded object generation.

Simulation Environments

Visual examples from representative scene generation methods.

Sim2Real Bridge

Representative results from three sim-to-real transfer pipelines.

Collections

The collection below is organized directly from the survey tables, with each major branch divided into its own subcategories.

Datasets & Evaluation

We summarize datasets and evaluation protocols along three resource groups: object assets, scene datasets, and robot demonstrations. Each dataset entry below links directly to its official page.

Dataset Scope

40 curated benchmarks spanning object assets, indoor scenes, and robot demonstrations.

Evaluation Levels

Metrics are organized by geometry quality, physical sim-readiness, and downstream embodied performance.

Usage Goal

Benchmarks are organized by group and category, with each entry linked to its dataset page.

Evaluation

Geometry & Appearance

Goal: fidelity and visual alignment

- CD / EMD / F-Score / IoU for geometric reconstruction quality

- FID / CLIP Score for rendered appearance and semantic consistency

- Watertight Ratio for simulator importability
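As an illustration of the geometric metrics listed above, the following sketch computes Chamfer Distance (CD) and F-Score between two small point sets. Conventions differ across papers (squared versus unsquared distances, sum versus mean); this version assumes unsquared Euclidean distances averaged per direction, and the threshold `tau` is a free parameter. It is a brute-force reference, not an efficient implementation.

```python
import math


def nearest_dists(src, dst):
    """For each point in src, the distance to its nearest neighbor in dst."""
    return [min(math.dist(p, q) for q in dst) for p in src]


def chamfer_distance(a, b):
    """Symmetric Chamfer Distance: mean nearest distance in both directions."""
    d_ab = nearest_dists(a, b)
    d_ba = nearest_dists(b, a)
    return sum(d_ab) / len(d_ab) + sum(d_ba) / len(d_ba)


def f_score(pred, gt, tau):
    """Harmonic mean of precision and recall at distance threshold tau."""
    precision = sum(d < tau for d in nearest_dists(pred, gt)) / len(pred)
    recall = sum(d < tau for d in nearest_dists(gt, pred)) / len(gt)
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    gt = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
    pred = [(0.0, 0.0, 0.5), (1.0, 0.0, 0.5)]  # gt shifted by 0.5 along z
    print(chamfer_distance(pred, gt))
    print(f_score(pred, gt, tau=0.1))
```

Lower CD means closer geometry, while F-Score rewards the fraction of points that fall within `tau` of the other set, which makes it less sensitive to a few distant outliers.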

Physical Plausibility

Goal: simulation readiness

- Stability Rate for rigid-body validity under gravity

- Joint Type / Axis / Origin / Limit for articulation correctness

- Collision-Free Ratio / Penetration Volume for assembly feasibility

- Material Error for physical property prediction
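The articulation metrics above can be sketched as per-joint comparisons between a predicted and a ground-truth joint. The dictionary layout and function names below are assumptions made for illustration. The axis error is treated as sign-agnostic, and the origin error is a plain point distance, which is a simplification for revolute joints where distance between axis lines is often more appropriate.

```python
import math


def axis_error_deg(pred_axis, gt_axis):
    """Angle in degrees between two joint axes, ignoring axis direction sign."""
    dot = sum(p * g for p, g in zip(pred_axis, gt_axis))
    norm = math.hypot(*pred_axis) * math.hypot(*gt_axis)
    # Clamp to guard against rounding slightly above 1.0.
    cos = min(1.0, abs(dot) / norm)
    return math.degrees(math.acos(cos))


def joint_errors(pred, gt):
    """Compare one predicted joint against ground truth on four criteria."""
    return {
        "type_match": pred["type"] == gt["type"],
        "axis_error_deg": axis_error_deg(pred["axis"], gt["axis"]),
        "origin_error": math.dist(pred["origin"], gt["origin"]),
        "limit_error": (abs(pred["lower"] - gt["lower"])
                        + abs(pred["upper"] - gt["upper"])),
    }


if __name__ == "__main__":
    gt = {"type": "revolute", "axis": (0, 0, 1), "origin": (0.2, 0.0, 0.0),
          "lower": 0.0, "upper": 1.57}
    pred = {"type": "revolute", "axis": (0, 0, -1), "origin": (0.2, 0.0, 0.1),
            "lower": 0.0, "upper": 1.5}
    print(joint_errors(pred, gt))
```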

Embodied Task Performance

Goal: downstream deployment

- Grasp SR / Articulation SR for object-level deployment success

- Navigation SR / SPL for environment usability

- Task Completion Rate for task-conditioned evaluation

- Sim-to-Real SR for end-to-end transfer quality
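For the navigation metrics above, success rate (SR) is the fraction of successful episodes, and SPL (Success weighted by Path Length) is commonly defined as the mean over episodes of S_i * l_i / max(p_i, l_i), where S_i is the success indicator, l_i the shortest-path length, and p_i the length of the path the agent actually took. The sketch below follows that definition; the episode dictionary keys are hypothetical.

```python
def success_rate(episodes):
    """Fraction of episodes that ended in success."""
    return sum(1 for e in episodes if e["success"]) / len(episodes)


def spl(episodes):
    """Success weighted by Path Length, averaged over all episodes."""
    total = 0.0
    for e in episodes:
        if e["success"]:
            # Efficiency is 1.0 for an optimal path and shrinks with detours.
            total += e["shortest"] / max(e["taken"], e["shortest"])
    return total / len(episodes)


if __name__ == "__main__":
    episodes = [
        {"success": True, "shortest": 10.0, "taken": 10.0},   # optimal path
        {"success": True, "shortest": 10.0, "taken": 20.0},   # 2x detour
        {"success": False, "shortest": 10.0, "taken": 5.0},   # failed
    ]
    print(success_rate(episodes), spl(episodes))
```

Failed episodes contribute zero to SPL regardless of path length, so SPL is always at most SR.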

Citation

If you find our work useful in your research, please consider citing:

@misc{ye20263dgeneration,
  title = {3D Generation for Embodied AI and Robotic Simulation: A Survey},
  author = {To be updated},
  year = {2026},
  howpublished = {arXiv preprint},
  note = {To be updated}
}